Venkateswara Rao Muttireddy, an expert in AI technologies, writes a special article for DM about why and how security breaches happen despite testing.

Security failures rarely begin with malicious intent or sophisticated attacks. They begin with confidence. Specifically, the confidence that comes from hearing the phrase, ‘It worked in testing.’ In controlled environments, systems behave as expected. Inputs are clean, access is limited, and processes are followed exactly as designed. Real operations are nothing like that. Once a system goes live, it enters a space shaped by human behavior, shifting priorities, and integrations that were never fully mapped.

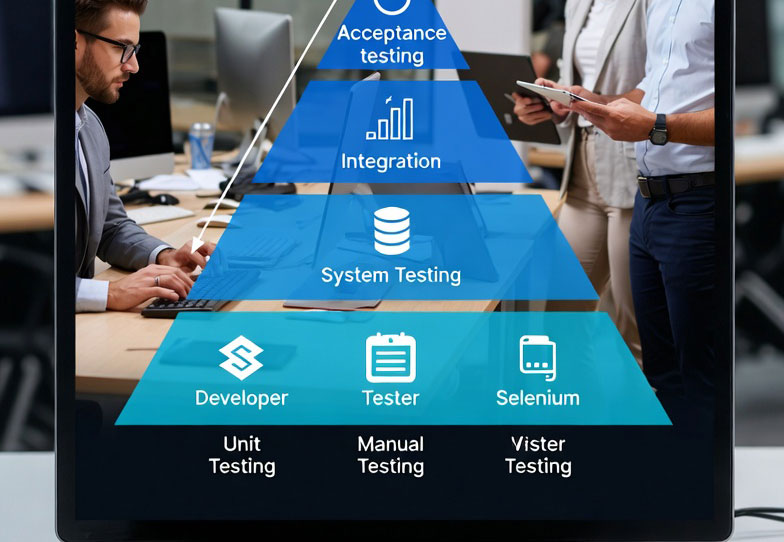

One of the most common causes of breaches is human adjustment. Teams under pressure make small changes to meet deadlines or keep services running. Temporary access becomes permanent. Monitoring steps are skipped to save time. Credentials are shared ‘just this once.’ None of these actions feel dangerous on their own, but together they create exposure that testing never accounted for.

Process gaps amplify the problem. Many organizations test individual components but rarely test end-to-end workflows under realistic conditions. Security checks may pass for one system while failing at the handoff between systems. When ownership changes across teams, assumptions replace verification. What was secure in isolation becomes vulnerable in combination.
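The drift from "temporary" to "permanent" access is one of the few adjustments above that can be checked mechanically. The following is a minimal sketch, not a description of any particular tool: the `AccessGrant` record, its fields, the `find_stale_grants` helper, and the sample data are all hypothetical, and a real audit would read grants from an identity or access management system rather than a hard-coded list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AccessGrant:
    """Hypothetical record of one access grant (illustrative fields only)."""
    user: str
    resource: str
    temporary: bool                  # was this granted as a short-term exception?
    expires_at: Optional[datetime]   # None means no expiry was ever recorded


def find_stale_grants(grants: List[AccessGrant], now: datetime) -> List[AccessGrant]:
    """Return temporary grants that have no expiry or are already past it."""
    return [
        g for g in grants
        if g.temporary and (g.expires_at is None or g.expires_at <= now)
    ]


# Made-up sample data for illustration.
now = datetime(2024, 1, 15, tzinfo=timezone.utc)
grants = [
    AccessGrant("alice", "prod-db", True, now - timedelta(days=3)),      # expired, still active
    AccessGrant("bob", "prod-db", True, None),                           # "temporary" with no end date
    AccessGrant("carol", "billing-api", True, now + timedelta(days=1)),  # still within its window
    AccessGrant("dave", "prod-db", False, None),                         # permanent by design
]

stale = find_stale_grants(grants, now)
for g in stale:
    print(f"review: {g.user} -> {g.resource}")
```

Run on a schedule against live data, a check like this turns a one-time test result into a recurring question: which exceptions are still in place, and does anyone still own them?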

Integration blind spots are where most defenses break down. Systems are connected incrementally over time, often by different teams and vendors. Each connection introduces new behavior. Logs may not align. Alerts may not propagate. Access controls may conflict. These issues don’t appear in test environments because the full ecosystem is rarely reproduced.

Another overlooked factor is the gap between documented processes and actual practice. Policies exist, but work gets done differently. Security teams protect what they can see. Breaches exploit what sits outside that visibility. The longer systems operate without revalidation, the wider that gap becomes.

The trade-off organizations avoid discussing is convenience versus discipline. Testing favors predictability. Operations demand flexibility. When flexibility grows without equal attention to oversight, exposure follows.

The lesson learned across incident reviews is consistent. Security does not fail in testing; it fails in transition. It fails when systems move from controlled scenarios to real-world use, where people adapt faster than processes evolve.

Organizations that reduce breaches focus less on perfect test results and more on continuous validation. They test integrations, observe real behavior, and assume change will happen. Security improves not when everything works in theory, but when it holds under pressure.